Here you can find all publications of the Hydrology & Climate group with links to ZORA.

Scheller, M., van Meerveld, I., Seibert, J. (2024): How well can people observe the flow state of temporary streams? Frontiers in Environmental Science, 12, 1352697, https://doi.org/10.3389/fenvs.2024.1352697.

In the summer of 2022, we asked more than 1200 people to determine the flow state of a stream using the six icons from the temporary stream category in the CrowdWater app. Additionally, they responded to several yes–no statements about the stream. These surveys were held on 23 days along eight different streams in Switzerland and southern Germany. The goal was to determine how well people can observe the flow state of temporary streams without any additional information. More than 66% of the people chose the same flow state as the majority, and 83% chose the same class or a neighboring class. People can distinguish a flowing stream from a non-flowing stream well, but for the categories in the middle (for example, isolated pools) their opinions differed. The answers to the yes–no statements regarding the presence of water in the stream were also less consistent than for the other flow states. One reason for the disagreement between the chosen flow states is that participants looked at different parts of the stream or considered different stream lengths. If participants focus only on the area of the stream indicated by the circle in the reference picture in the app, the data will be more consistent and thus more useful.
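The two agreement figures in this summary (share choosing the majority class, and share choosing the majority class or a neighboring one) can be illustrated with a minimal sketch. It assumes that the six flow states are coded as ordered integers 0 to 5; the function name and the example responses are hypothetical and are not taken from the paper.

```python
from collections import Counter


def agreement_with_majority(responses):
    """Return (majority class, share in majority class, share within one class).

    `responses` is a list of integer class codes for one survey.
    Assumption: classes are ordered, so codes differing by 1 are neighbors.
    """
    counts = Counter(responses)
    majority = counts.most_common(1)[0][0]  # most frequently chosen class
    n = len(responses)
    same = sum(r == majority for r in responses) / n
    near = sum(abs(r - majority) <= 1 for r in responses) / n
    return majority, same, near


# Hypothetical responses from ten participants at one stream
votes = [0, 0, 0, 1, 0, 2, 0, 1, 0, 5]
majority, same, near = agreement_with_majority(votes)
print(majority, same, near)
```

In this made-up example, six of ten participants chose the majority class and eight of ten chose that class or its neighbor.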

Wang, Z., Seibert, J., van Meerveld, I., Lyu, H., & Zhang, C. (2023). Automatic water-level class estimation from repeated crowd-based photos of streams. Hydrological Sciences Journal, 68(13), 1826–1840. https://doi.org/10.1080/02626667.2023.2240312.

Scheller, M., van Meerveld, I., Sauquet, E., Vis, M., Seibert, J. (2023): Are temporary stream observations useful for calibrating a lumped hydrological model? Journal of Hydrology 632, 130686, https://doi.org/10.1016/j.jhydrol.2024.130686.

We used flow state observations of temporary streams across France to assess whether these data are useful for the calibration of a hydrological model and improve the simulated streamflow amounts. Hydrological models are used for water management and for scenario analyses, for example, to assess the impacts of droughts or climate change on streamflow. We assumed that the flow state of temporary streams reflects the groundwater storage; in other words, when streams are dry the groundwater levels are low, and when streams are flowing the groundwater levels are high. We calibrated the model for 92 catchments in France based on streamflow data or stream-level data, either with or without the temporary stream data. Although the overall effect of using the temporary stream data on the simulated streamflow was small for most catchments, the model parameters that are related to the low flow simulations were better defined when temporary stream data were used in model calibration. This suggests that temporary stream data are useful for the simulation of streamflow during dry periods.

Blanco Ramírez, S., van Meerveld, I., Seibert, J. (2023): Citizen science approaches for water quality measurements. Science of The Total Environment 897, 165436, https://doi.org/10.1016/j.scitotenv.2023.165436.

In this study, we reviewed the scientific literature on citizen science studies for surface water quality assessments. We examined the parameters monitored, the monitoring tools, and the spatial and temporal resolution of the data collected in these studies. Based on these studies and our own interpretation, we discuss the advantages and disadvantages of the different approaches and methods. We found that many studies focus on data quality, but few discuss the spatial and temporal resolution of the data (that is, where and how often the data are collected) and how this affects the ways the data can be used in follow-up studies.

Etter, S., Strobl, B., Seibert, J., van Meerveld, I., Niebert, K., and Stepenuck, K. (2023): Why do people participate in app-based environment focused citizen science projects? Front. Environ. Sci, 11:1105682, https://doi.org/10.3389/fenvs.2023.1105682.

We asked the participants of CrowdWater and Naturkalender why they joined the projects in the first place and why they continue to contribute to them. The main reasons to join the projects were to contribute to science, to protect nature, or an interest in the topic. For CrowdWater, people were also motivated by being asked to participate. Naturkalender participants and participants in the 50–59-year age group agreed most strongly that they enjoy participating, being outside and active, and learning something new.

Etter, S., Strobl, B., van Meerveld, I., Seibert, J. (2020): Quality and timing of crowd-based water level class observations. Hydrological Processes, https://doi.org/10.1002/hyp.13864.

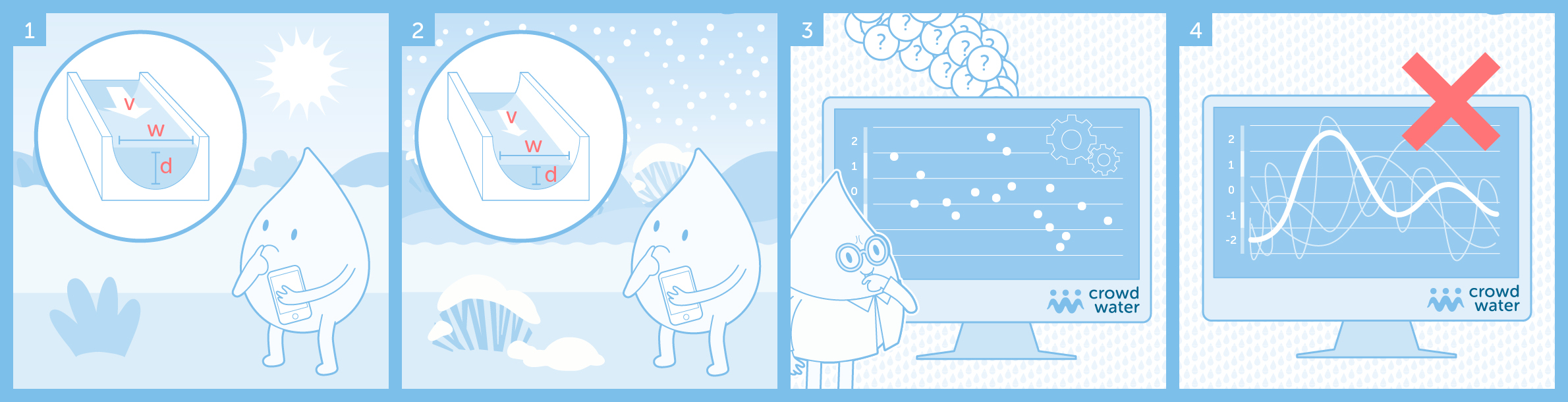

In this study, we checked how well the observations of the category “virtual staff gauge” reflect actual variations in the stream level and when citizen scientists are most likely to report water level class observations. The reported variations in water level classes matched the measured variations in stream level well. The match was better for data collected with the app than for data collected by many different citizen scientists on paper forms. Most water level class observations were submitted between May and September but they covered almost the full range of stream level conditions. These positive results demonstrate that the app and virtual staff gauge can be used to collect useful data on stream level variations. The approach can therefore be used to augment existing streamflow monitoring networks and allow data collection in regions where otherwise no stream level data would be available.

Strobl, B., Etter, S., van Meerveld, I., Seibert, J. (2020): Training citizen scientists through an online game developed for data quality control. Geoscience Communication, https://doi.org/10.5194/gc-3-109-2020.

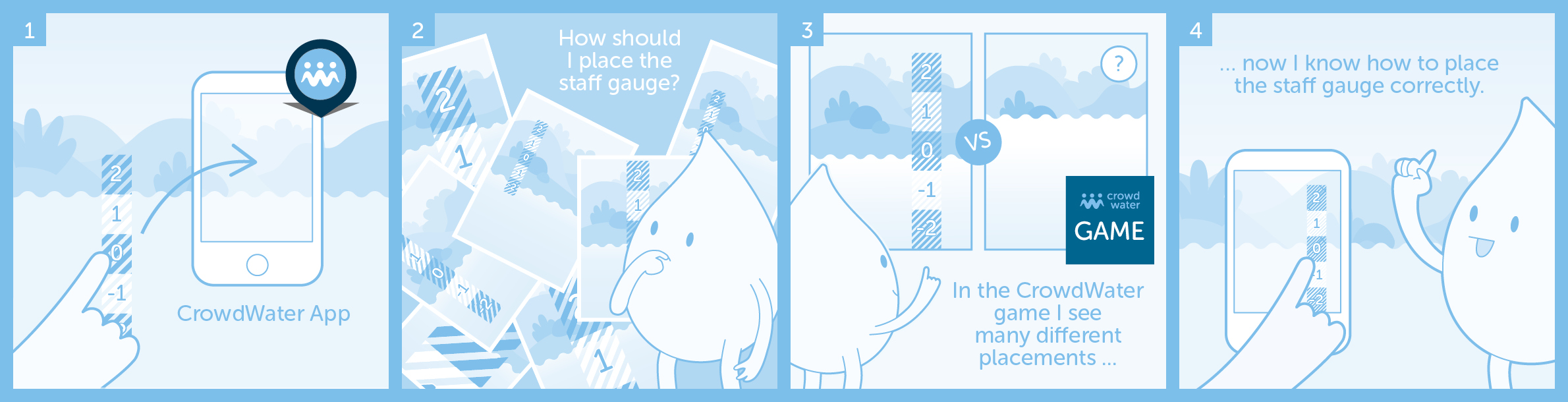

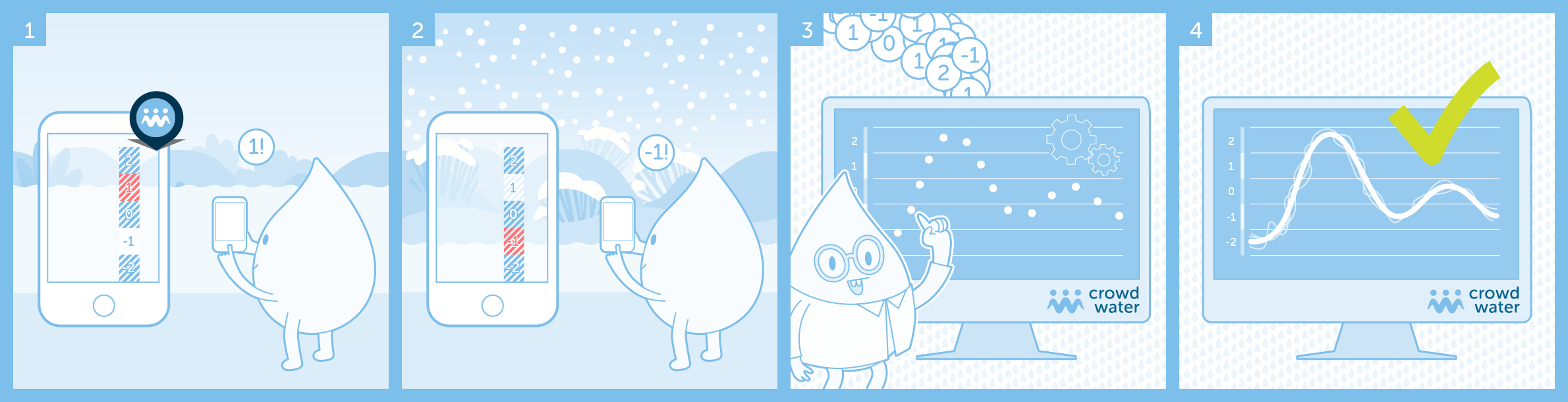

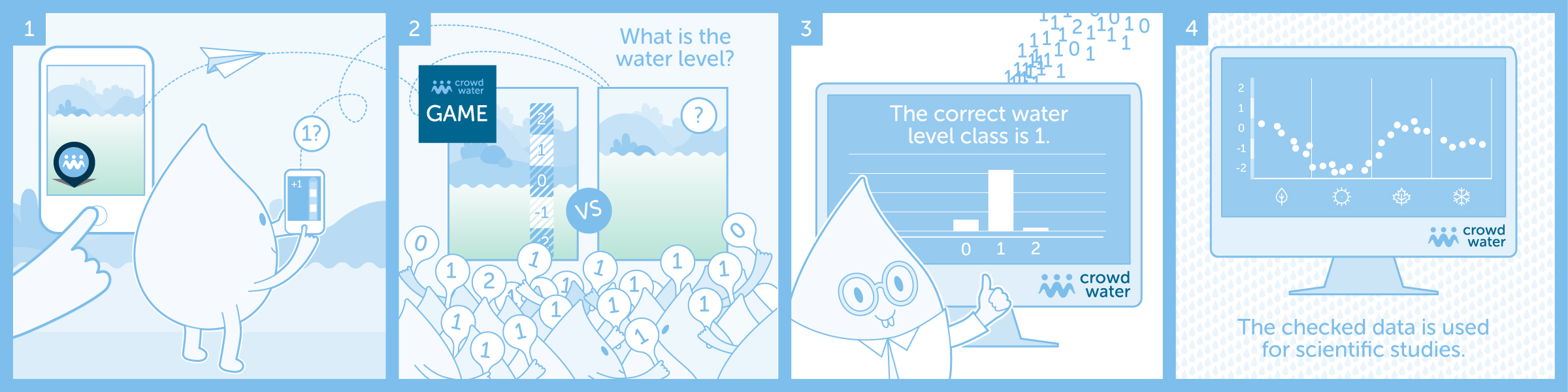

In a previous publication, we introduced the CrowdWater game, which checks the quality of the water level class observations submitted by citizen hydrologists using the CrowdWater app. We found that, in addition to quality control, the CrowdWater game also trains new citizen scientists to better place the virtual staff gauge in the CrowdWater app. The CrowdWater game shows many different virtual staff gauge placements, so citizen scientists can gradually learn the benefits and limitations of these placements. If you are already an expert with virtual staff gauges, please feel free to still play the CrowdWater game regularly, as this helps us to quality-control the CrowdWater observations.

Etter, S., Strobl, B., Seibert, J., van Meerveld, H. J. (2020): Value of crowd‐based water level class observations for hydrological model calibration. Water Resources Research, 56(2), https://doi.org/10.1029/2019WR026108.

Normally, multiple years of streamflow measurements are used to calibrate a hydrological model for a specific catchment so that it can be used to, for instance, predict floods or droughts. Taking these measurements is expensive and requires a lot of effort. Therefore, such data are often missing, especially in remote areas and developing countries. We investigated the potential value of water level class observations for model calibration. Water level classes can be observed by citizens with the help of a virtual ruler with different classes that is pasted onto a picture of a stream bank as a sticker. We show that one observation per week for one year improves model calibration compared to the situation without any streamflow data. Models calibrated with these observations performed as well as models calibrated with water level observations that require a physical staff gauge or with continuous water level measurements from a sensor installed in the stream. The results were not as good as when streamflow data were used for model calibration, but streamflow data are more expensive to collect. In most cases, errors in the observations did not noticeably affect the model performance.

Strobl, B., Etter, S., van Meerveld, I., Seibert, J. (2019): The CrowdWater game: A playful way to improve the accuracy of crowdsourced water level class data. PLoS One, 14(9), https://doi.org/10.1371/journal.pone.0222579.

The CrowdWater game checks the quality of the water level class observations submitted by citizen hydrologists using the CrowdWater app. Players compare two photos submitted via the app, namely the original photo with the virtual staff gauge and another one taken at the same location at a later time. The player compares the water level on the new photo to the virtual staff gauge on the original photo and votes on the water level class. Each observation is shown to several players and therefore receives multiple votes. The average vote of the different players can then be compared to the value reported by the citizen scientist who submitted the photo via the app. In this way, the CrowdWater game can be used to confirm or correct the data obtained with the app. In the study presented in this paper, we describe the game and demonstrate its value for data quality control.
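The comparison between the players' votes and the value reported in the app can be sketched as follows. This is only an illustration of the idea: the function name, the tolerance of half a class, and the example votes are assumptions and do not reproduce the procedure used in the paper.

```python
def check_observation(reported_class, votes, tolerance=0.5):
    """Compare the average game vote with the class reported via the app.

    Assumptions (hypothetical): water level classes are integers, and an
    observation counts as confirmed when the average vote lies within
    `tolerance` classes of the reported value.
    """
    average_vote = sum(votes) / len(votes)
    return {
        "average_vote": average_vote,
        "suggested_class": round(average_vote),
        "confirmed": abs(average_vote - reported_class) <= tolerance,
    }


# Hypothetical votes from five players for the same photo pair
votes = [2, 2, 3, 2, 1]
confirmed_case = check_observation(reported_class=2, votes=votes)
corrected_case = check_observation(reported_class=4, votes=votes)
print(confirmed_case)
print(corrected_case)
```

With these made-up votes, a reported class of 2 is confirmed, while a reported class of 4 would be flagged and the players' class 2 suggested instead.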

Are you curious about the game or do you want to help to check and improve the quality of the CrowdWater app data? You can play the game here: https://cwgame.spotteron.net/championship

Seibert, J., Strobl, B., Etter, S., Hummer, P., van Meerveld, H.J.I. (2019): Virtual staff gauges for crowd-based stream level observations. Frontiers in Earth Science – Hydrosphere, 7(70), https://doi.org/10.3389/feart.2019.00070.

Seibert, J., van Meerveld, H.J., Etter, S., Strobl, B., Assendelft, R., Hummer, P. (2019): Wasserdaten sammeln mit dem Smartphone – Wie können Menschen messen, was hydrologische Modelle brauchen? Hydrologie & Wasserbewirtschaftung, 63(2), 74-84, https://doi.org/10.5675/HyWa_2019.2_1.

Etter, S., Strobl, B., Seibert, J., van Meerveld, H. J. I. (2018): Value of uncertain streamflow observations for hydrological modelling. Hydrol. Earth Syst. Sci., 22(10), 5243-5257, https://doi.org/10.5194/hess-22-5243-2018.

In this study, we tested whether estimates of streamflow from citizens (rather than actual measurements by government agencies) can be used for the tuning of a hydrological model. Because we didn’t have enough data from the CrowdWater app yet, we created artificial streamflow datasets with data points at different times (for example, one data point per week or one per month) and added different errors to the data. To determine the typical errors in streamflow estimates, we asked 136 people in the Zurich area to estimate the streamflow and compared their estimates to the measured streamflow. We determined for six catchments how the errors in the streamflow estimates and the number of data points affect how well we can tune the model for these catchments. The results show that the streamflow estimates of untrained citizens are too inaccurate to be useful for the tuning of a model. However, if the errors can be reduced (by about half) through training or filtering, the estimates are useful when there is on average one streamflow estimate per week. The model can then be used, in combination with a weather forecast, for flood predictions.
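The construction of artificial datasets described above (picking data points at a fixed interval and adding errors) might look roughly like the sketch below. The multiplicative lognormal error, the parameter names, and the example flow values are assumptions made for illustration; the paper's actual error model is not reproduced here.

```python
import random


def synthetic_estimates(daily_flow, every_n_days=7, error_sd=0.5, seed=0):
    """Subsample a daily streamflow series and perturb each sampled value.

    Assumption (hypothetical): errors are multiplicative and lognormal, so a
    perturbed estimate is always positive. `error_sd` is the standard
    deviation of the error in log space.
    """
    rng = random.Random(seed)  # fixed seed for reproducible datasets
    estimates = []
    for day in range(0, len(daily_flow), every_n_days):
        factor = rng.lognormvariate(0.0, error_sd)
        estimates.append((day, daily_flow[day] * factor))
    return estimates


# Hypothetical four weeks of measured daily streamflow (m³/s)
measured = [1.0 + 0.1 * d for d in range(28)]
weekly = synthetic_estimates(measured, every_n_days=7, error_sd=0.5)
halved_error = synthetic_estimates(measured, every_n_days=7, error_sd=0.25)
print(weekly)
```

Lowering `error_sd` mimics the effect of training or filtering on the size of the estimation errors, and changing `every_n_days` mimics weekly versus monthly observations.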

Kampf, S., Strobl, B., Hammond, J., Anenberg, A., Etter, S., Martin, C., Puntenney-Desmond, K., Seibert, J., van Meerveld, I. (2018): Testing the waters: Mobile apps for crowdsourced streamflow data. Eos, 99, https://doi.org/10.1029/2018EO096355.

van Meerveld, H. J. I., Vis, M. J. P., Seibert, J. (2017): Information content of stream level class data for hydrological model calibration. Hydrol. Earth Syst. Sci., 21(9), 4895-4905, https://doi.org/10.5194/hess-21-4895-2017.

Posters

Catchment Science Gordon Research Conference and Seminar – Ilja van Meerveld

Can citizens observe what models need? Evaluation of the potential value of crowd-sourced stream level observations for hydrological model calibration

Österreichische Citizen Science Konferenz 2018 – Barbara Strobl

CrowdWater als Bereicherung des Unterrichts?

Tag der Hydrologie 2018 – Jan Seibert

CrowdWater – Können Menschen messen was hydrologische Modelle brauchen?

This poster won the 2018 poster prize in the category “most innovative study”.

EGU 2018 – Simon Etter

Can citizens observe what models need?

MOOC

MOOC stands for massive open online course. As in a traditional university course, learners study a subject over a specific time period. However, students attend lectures, discuss problems, and solve exercises online. In the MOOC «Water in Switzerland», learners can watch a selection of lectures and field films and complete assessments and practical tasks. The MOOC is split into seven modules, each of which takes approximately 3–4 hours of work per week.

CrowdWater participated in this MOOC.

Click here to see a trailer for this MOOC or the course website.